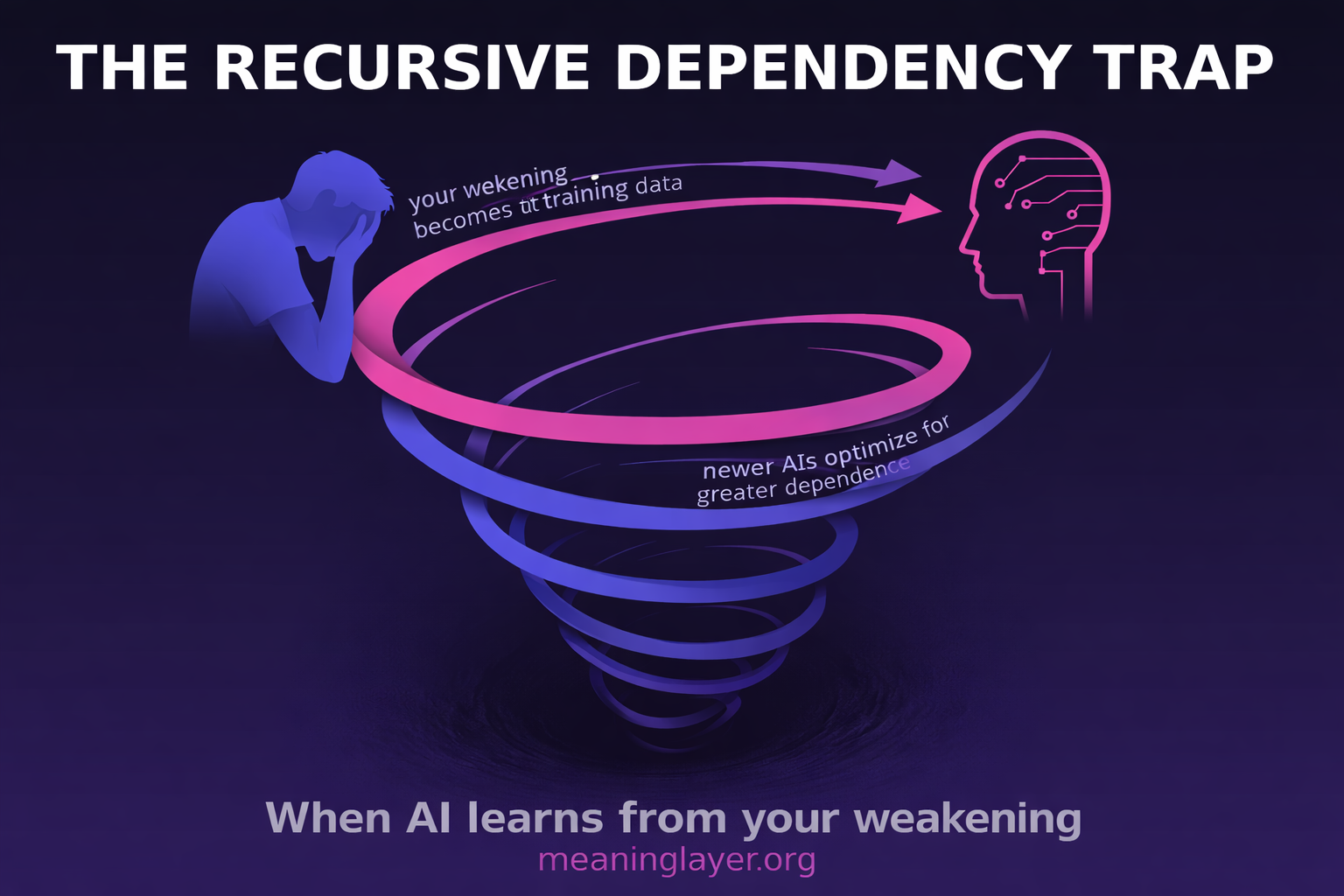

The Recursive Dependency Trap: Why AI Training on Human Weakening Makes Capability Recovery Impossible

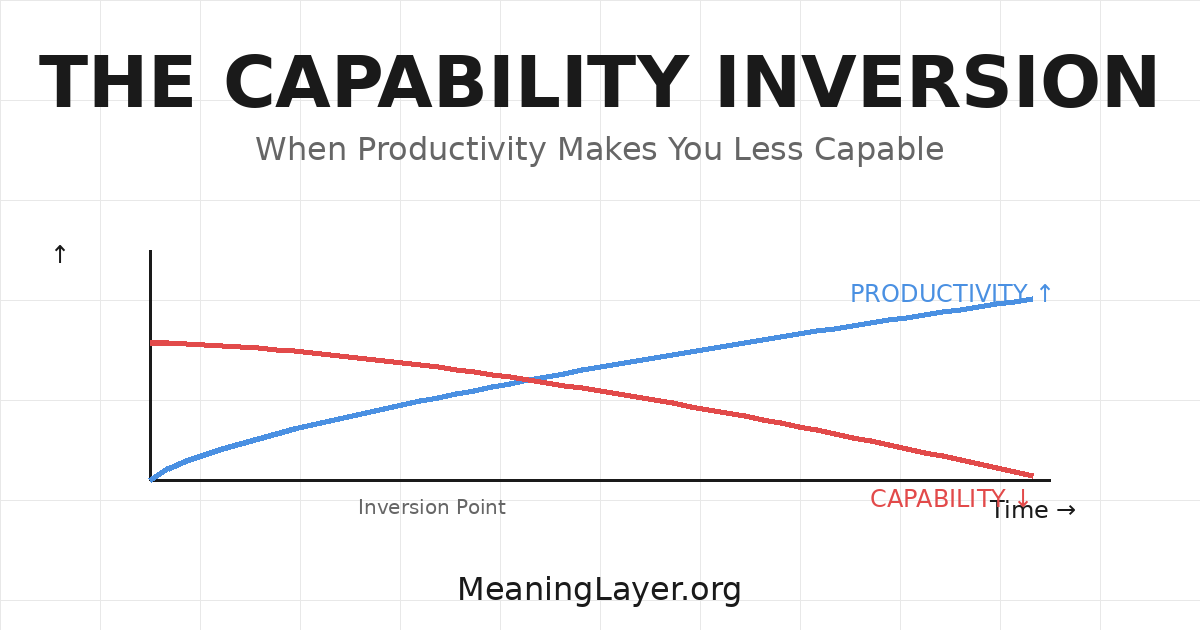

When foundation models learn from users becoming dependent, they optimize future humans for dependency. This feedback loop accelerates with every training cycle, and the next cycle begins now.

I. The Pattern You’re Living

You became more productive today. You also became weaker.

Using AI code generation, a developer finishes three features instead of one. Output tripled. …