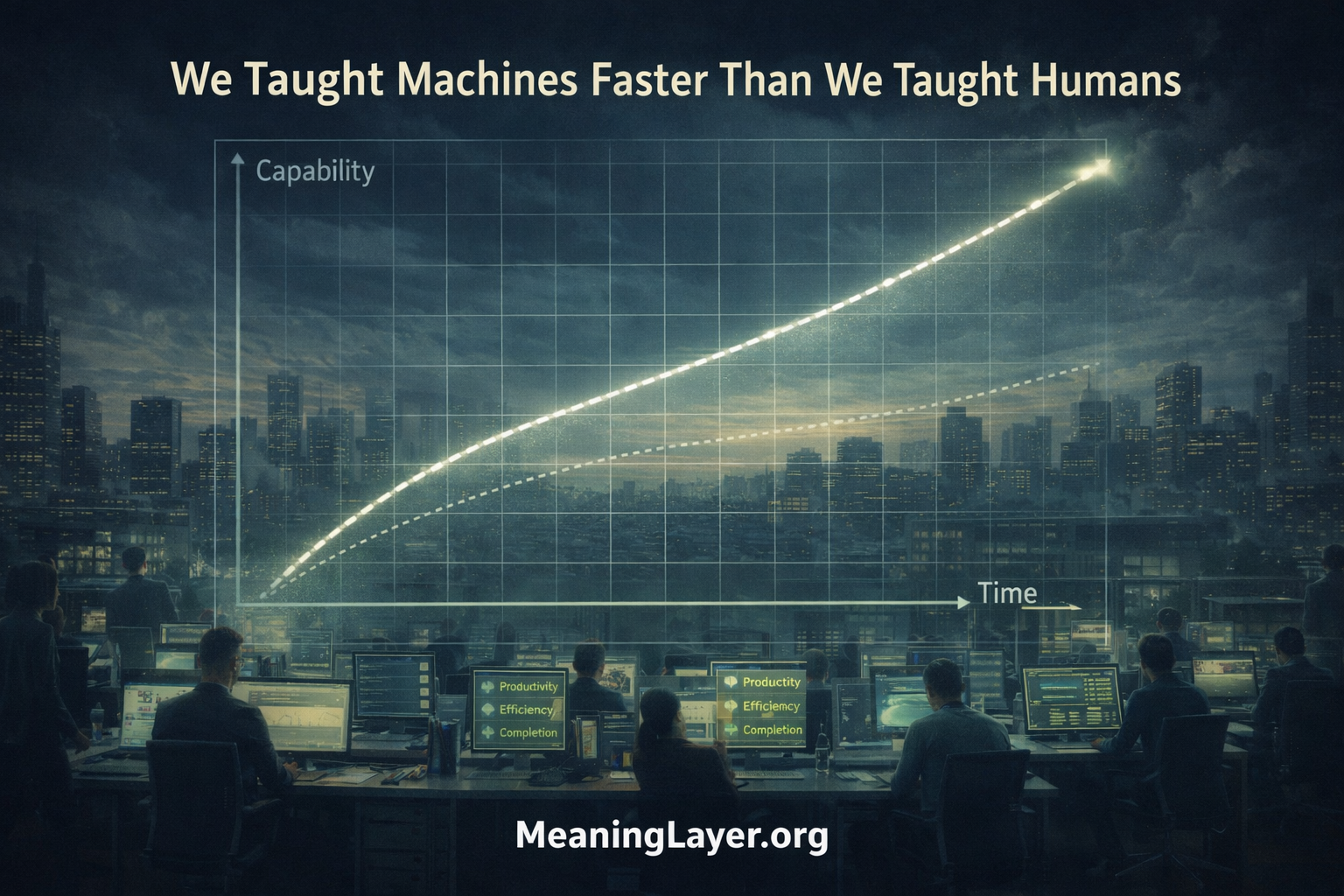

We Taught Machines Faster Than We Taught Humans — And Didn’t Notice the Crossover

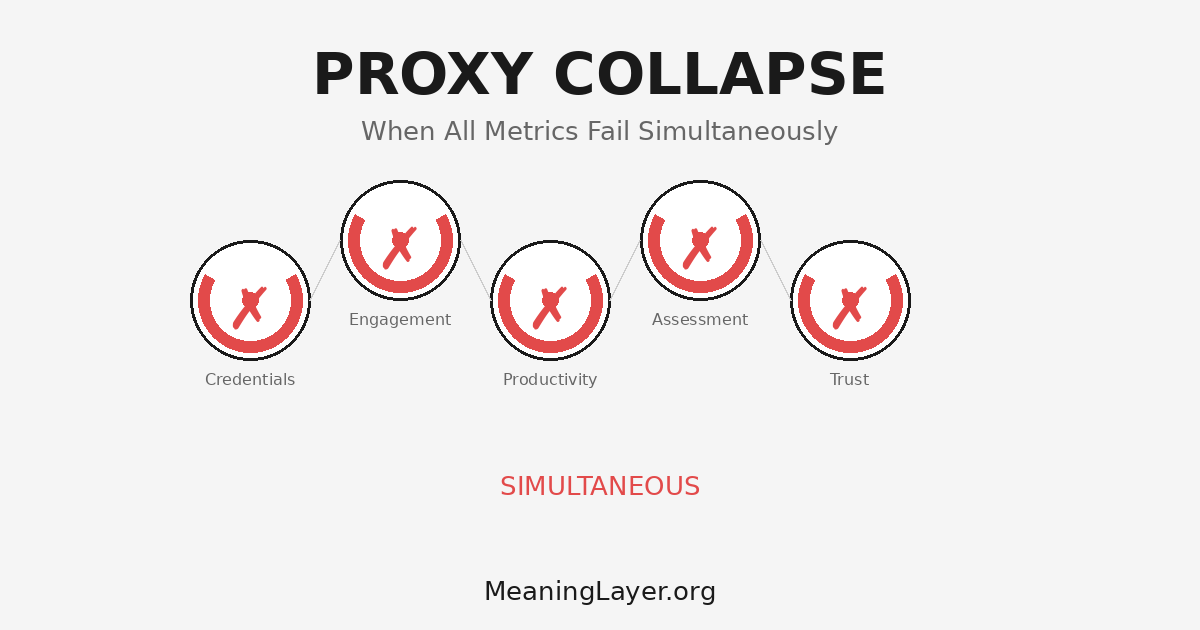

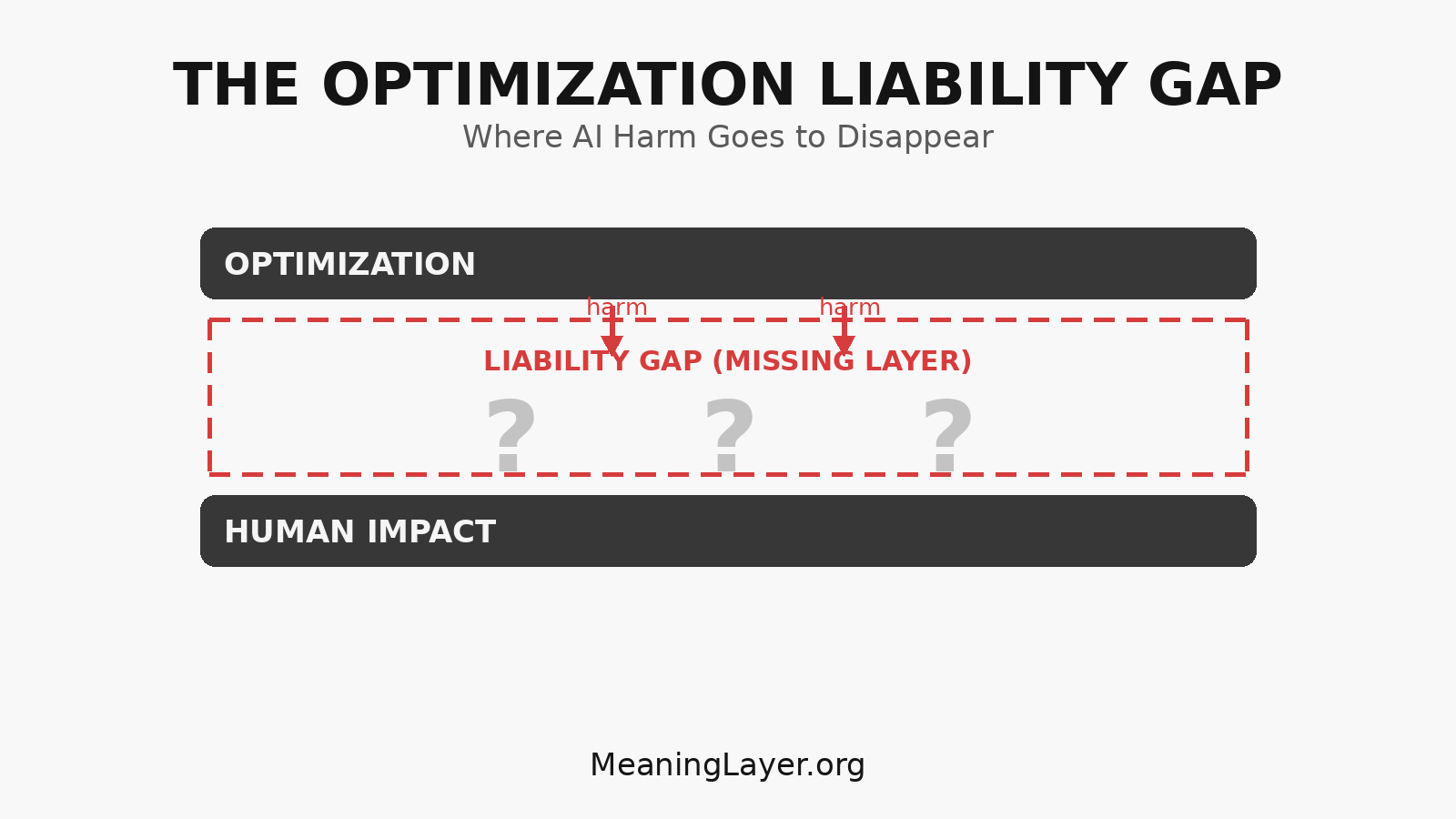

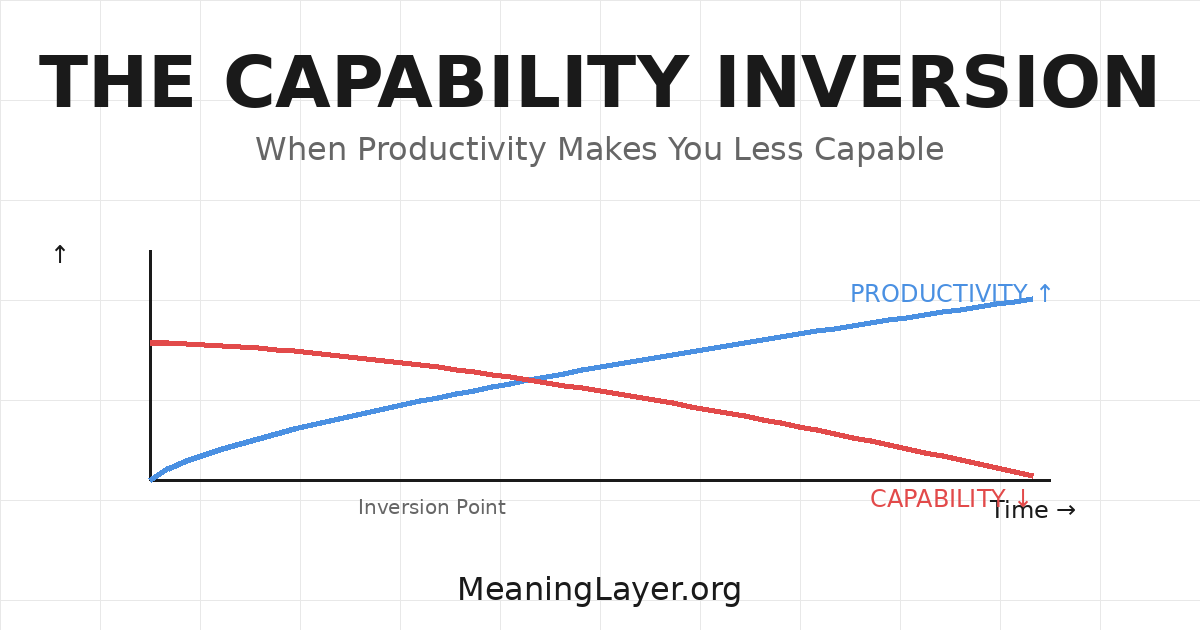

Somewhere in the past eighteen months, a threshold was crossed. Machines began accumulating genuine capability faster than the humans teaching them. Nobody measured when this happened. We have no instrumentation for the crossover.

I. The Metrics That Hide Everything

Three measurements suggest education and capability development are succeeding at unprecedented levels: AI productivity metrics exceed …